Now, you have much higher activity levels in BiglyBT than I do, but you also have a much better connection. (I'm going to assume a faster machine as well, because I'm running BiglyBT on a 2-core Athlon 64 box from like 2007.) Which is why I was hoping the options changes would at least improve the lag stats. If there has been improvement, then at least we're moving things in the right direction and can try to improve them more. But the way to evaluate the effects of any changes would be to look for a drop in the lag shown on that graph. I hope to see that turning off inactive scrapes and lowering connection timeouts helped somewhat. If the changes had NO effect, then it means I'm on the wrong track with that, and there must be some other cause entirely that we'll have to try to understand.

I definitely don't buy (based on how the lag is manifesting) that it has anything to do with concurrency, despite what that error dialog suggests. (Especially when it's scrape lag, something that would have nothing to do with "concurrent announce tasks", because scrapes are precisely not announces. In a sense they're the opposite of announces, or at least an alternative to them.)

Though I am currently far from putting a high load on my 500/500 Fibre. So the system is still asking me to increase the concurrency.

The message probably needs to be changed, since the assumption that lag issues are caused by a lack of concurrency is clearly incorrect. That's the trigger for that message, ultimately: the lines on the lower-left "Update Lag" graph rising too high. What do the Tracker graphs look like with the updated settings? In your previous screenshot, it showed quite a bit of lag on Scrape attempts. On my system, no lag ever seems to register there under any circumstances.

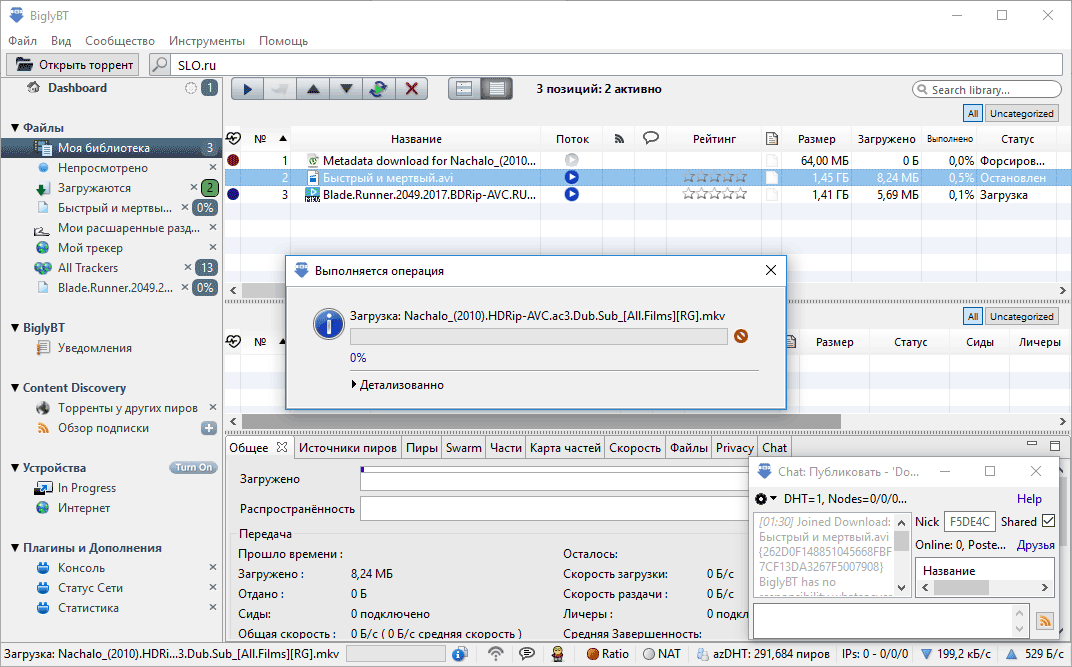

Ok, I am getting this again.

My stats graphs always look about like

Tried the numbers you suggested, here is what it looks like: (Along with not scraping inactive torrents.) Notice I didn't go all the way down to 25, I used 128, down from the 1024 before. But it still tells me to increase the concurrency.

Oh, and to give you an idea of how much I am seeding, and my queue settings: I have seen BiglyBT reach upload speeds over 250 Mbit/s, though more typical is 120-160 Mbit/s. In the last few months, in spite of new torrents on RARBG containing usually only their own Trackers. Those trackers are showing as Error: Offline (No data received) or Error: Failed (Connection timed out: connect). So now upload performance has dropped and ranges from 20 Mbit/s to 70 Mbit/s.

And the fun part is I have 500/500 Fibre, and am using a program that can download video from various sites, that I just got. Reported in both the program, and on my Network Meter gadget. The Network Meter has higher numbers as it shows Overhead and other traffic. And you can see the Distributed Database show 462k users. And at 128 I still have it higher than the 25 you suggested. My goal with my system is to Seed as much as possible as fast as possible.

I'm not sure what to look for in that Help Menu section. I copied all the messages pertaining to one particular IP:

Peer connection closed: failed to establish outgoing connection: Connection attempt to :55748 aborted: timed out after 15sec | Download: 'filename' Peer: L:

I'd probably start with turning off "Scrape torrents that aren't running", personally. If you have a significant number of inactive torrents (and it seems you do, specifically all of the torrents in various "Queued" states), there's not a lot of value to scraping them all. "Manage tracker activation to reduce load" could also possibly help (though I don't know if that will affect scraping at all).

Also possibly related: Two minutes is an incredibly long connect timeout. With that kind of window, every attempt to contact an unresponsive tracker is going to potentially delay a subsequent connection to a working tracker by up to 120 seconds. I'd probably drop that way, way down - if a tracker isn't able to respond to a connection attempt within a few seconds, even if the connection would eventually succeed, it's probably so overloaded (or having other issues) that you're better off just bailing on it and moving to the next one in the list. The whole point of having multiple trackers for each torrent is that connecting to any one of them is unimportant - there's always the next one on the list. Short timeouts ensure that busy trackers or ones that are having network connectivity issues can be treated the same as offline trackers, and passed over in favor of more responsive ones. Personally, I use 15 seconds for the connect timeout, 35s for the read timeout, and a concurrency of 25.
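
To put rough numbers on the connect-timeout point, here is a back-of-the-envelope sketch in Python. It is a deliberately simplified model and not how BiglyBT actually schedules tracker work: it assumes every unresponsive tracker holds one worker slot for the full connect timeout, and that slots are filled in waves. The function name and the figure of 500 dead trackers are made up for illustration; the 120s and 15s timeouts and the concurrency of 25 are the values discussed in this thread.

```python
import math


def worst_case_stall_seconds(unresponsive: int, concurrency: int,
                             connect_timeout_s: float) -> float:
    """Rough upper bound on how long dead trackers can tie up the worker pool.

    Simplified model (an assumption, not BiglyBT's real scheduler): each
    unresponsive tracker occupies one slot until the connect timeout fires,
    and the pool works through them in waves of `concurrency` at a time.
    """
    if concurrency <= 0:
        raise ValueError("concurrency must be positive")
    waves = math.ceil(unresponsive / concurrency)
    return waves * connect_timeout_s


if __name__ == "__main__":
    dead_trackers = 500  # hypothetical count, purely for illustration
    for timeout_s in (120, 15):
        stall = worst_case_stall_seconds(dead_trackers, 25, timeout_s)
        print(f"connect timeout {timeout_s:>3}s -> up to {stall / 60:.0f} min of stalled slots")
```

Under this model the stall scales linearly with the timeout, so cutting the connect timeout from 120s to 15s cuts the worst case by the same factor of eight, without touching concurrency at all.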
